Cognitive Ability Test

Everything you ever wanted to know about cognitive ability testing

Table of contents:

What is a cognitive ability test?

What does a cognitive ability test measure?

Types of cognitive ability test:

What ability tests are available on the my.clevry platform?

How does a cognitive ability test work?

Developing high-level cognitive tests

Verifying test scores for candidates

Assessing cognitive ability accessibly

How is a cognitive ability test scored?

What is a Cognitive Ability Test?

Cognitive ability tests provide a standardised way of measuring an individual’s performance in different work related tasks or situations. They measure potential rather than just academic performance, and are frequently used by employers as indicators of how people will perform in a work setting.

Ability tests provide valuable insight into a candidate’s ability to process information whilst working within a time limit. They are a good predictor of job performance and, when used alongside personality questionnaires, help to provide a more rounded picture of an individual at work.

The results from a cognitive ability test show recruiters how a candidate has performed in comparison to a diverse range of other candidates. This allows recruiters to gauge more easily the traits that correlate with success in a specific role, make better recruitment decisions and hire more efficiently.

High reliability

Cognitive ability tests demonstrate high reliability, meaning that individuals tend to achieve similar scores when taking the same test on multiple occasions. This reliability allows for consistent measurement of cognitive abilities over time.

Predictive validity

Cognitive ability tests have been shown to be highly predictive of future job performance. Research suggests that individuals who perform well on cognitive ability tests tend to excel in complex problem-solving, learning new tasks quickly, and adapting to changing work environments.

Culturally neutral

Cognitive ability tests are designed to be culturally neutral, meaning that they aim to assess cognitive abilities without being influenced by cultural background or language proficiency. This allows for fair and unbiased assessment across diverse populations.
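
As a rough illustration of the reliability point above: the tendency to achieve similar scores on repeated sittings is commonly summarised as the correlation between first and second attempts. The sketch below assumes a plain Pearson correlation and uses invented scores; it is not a description of how any particular test publisher reports reliability.

```python
from math import sqrt

def pearson_r(first, second):
    """Pearson correlation between two equal-length lists of scores."""
    n = len(first)
    mean_1 = sum(first) / n
    mean_2 = sum(second) / n
    cov = sum((a - mean_1) * (b - mean_2) for a, b in zip(first, second))
    var_1 = sum((a - mean_1) ** 2 for a in first)
    var_2 = sum((b - mean_2) ** 2 for b in second)
    return cov / sqrt(var_1 * var_2)

# Invented raw scores for five people who sat the same test twice.
first_sitting = [12, 9, 14, 7, 10]
second_sitting = [11, 9, 13, 8, 10]

# A value close to 1 means people keep roughly the same rank order.
reliability = pearson_r(first_sitting, second_sitting)
```

A value near 1 indicates that candidates who scored well the first time also scored well the second time, which is what "high reliability" means in practice.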

What does a cognitive ability test measure?

Cognitive ability tests measure various aspects of a person’s intellectual potential, such as their problem-solving skills, speed, learning capacity, and general cognitive functioning.

Clevry’s cognitive ability tests typically measure:

Verbal Reasoning: This assesses a person’s ability to understand and manipulate words, language, and verbal information.

Numerical Reasoning: Measures a person’s proficiency in working with numbers, mathematical operations, and understanding numerical patterns.

Mechanical Reasoning: Helps to measure an individual’s ability to visualise and mentally manipulate shapes, levers, objects, and spatial relationships.

Abstract Reasoning: Also known as non-verbal reasoning, measures a person’s ability to recognise patterns and relationships in visual stimuli without relying on language or prior knowledge.

Checking Test: This assesses how quickly an individual can perform simple cognitive tasks, such as matching symbols or identifying patterns.

It’s important to note that while cognitive ability tests provide valuable information, they are just one aspect of understanding an individual’s overall abilities and potential. Other factors, such as motivation, personality traits, and emotional intelligence, also play significant roles in determining a person’s success and performance in various tasks and settings.

Types of Cognitive Ability Test

We offer five different types of cognitive ability test: numerical, verbal, checking, abstract and mechanical.

Ability:

Numerical

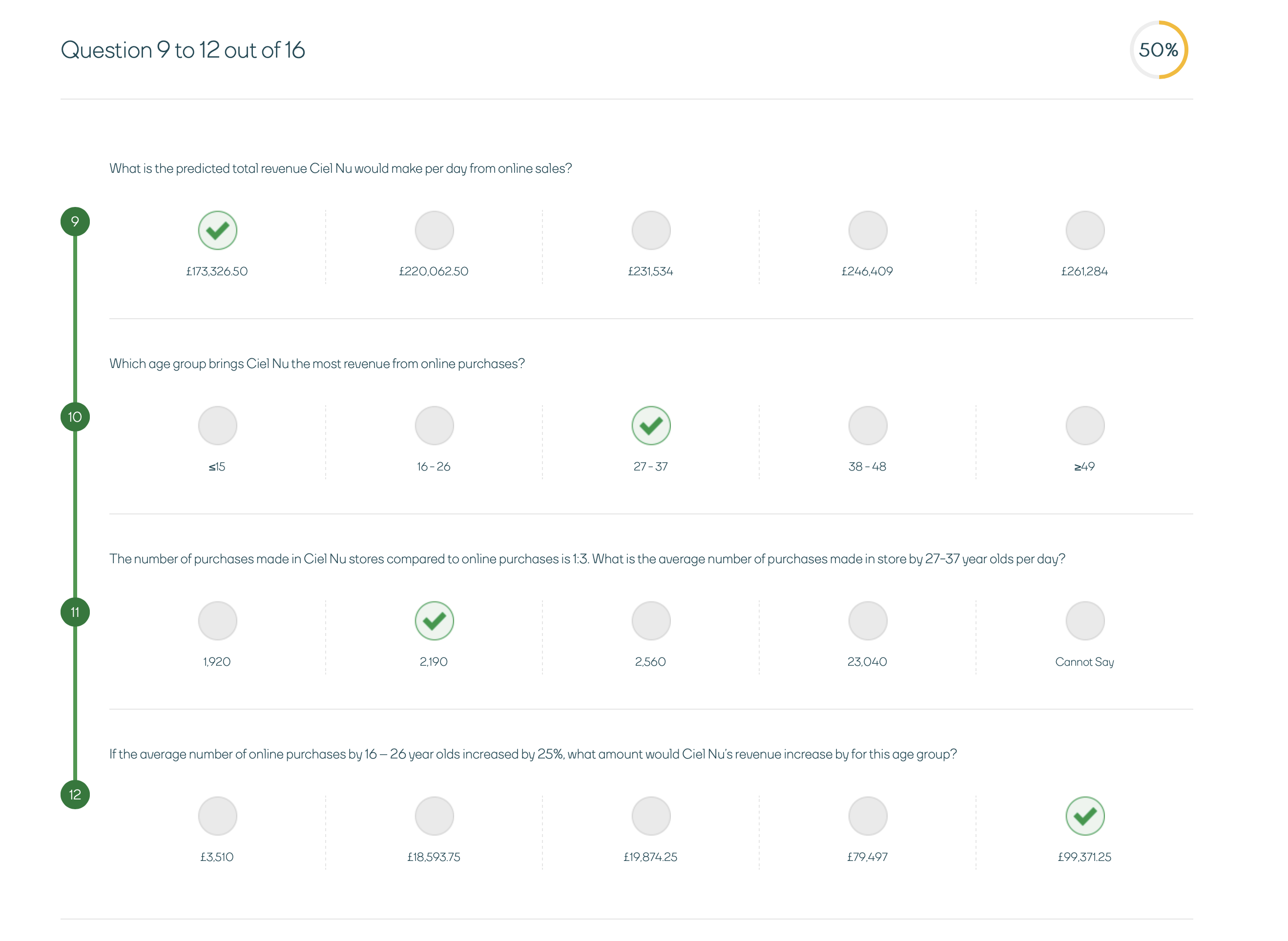

Numerical cognitive ability tests measure high-level numerical critical reasoning, requiring candidates to analyse and manipulate numerical data.

Results from numerical reasoning tests have been found to be predictive of performance in roles that require numerical reasoning, such as working with analytics data, understanding and manipulating numerical information, working with financial data or performing cost calculations.

Ability:

Verbal

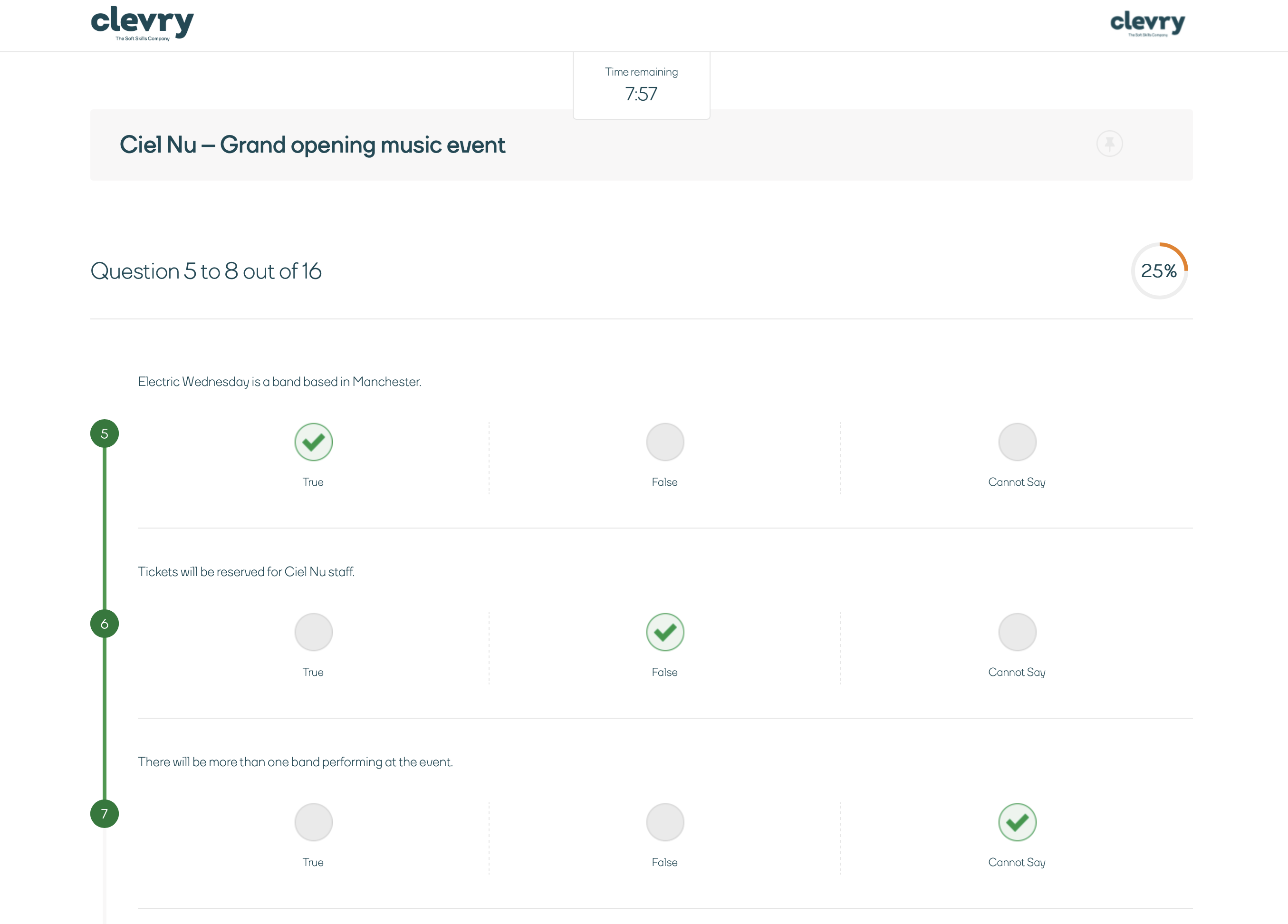

Verbal cognitive ability tests measure high-level verbal critical reasoning, requiring candidates to comprehend and evaluate meaning using precise logical thinking.

Results from verbal reasoning tests have been found to be predictive of performance in roles that require regular use of verbal reasoning skills, for example analysing and making judgements about complex written material such as reports, proposals, letters, emails or other documents.

Ability:

Checking

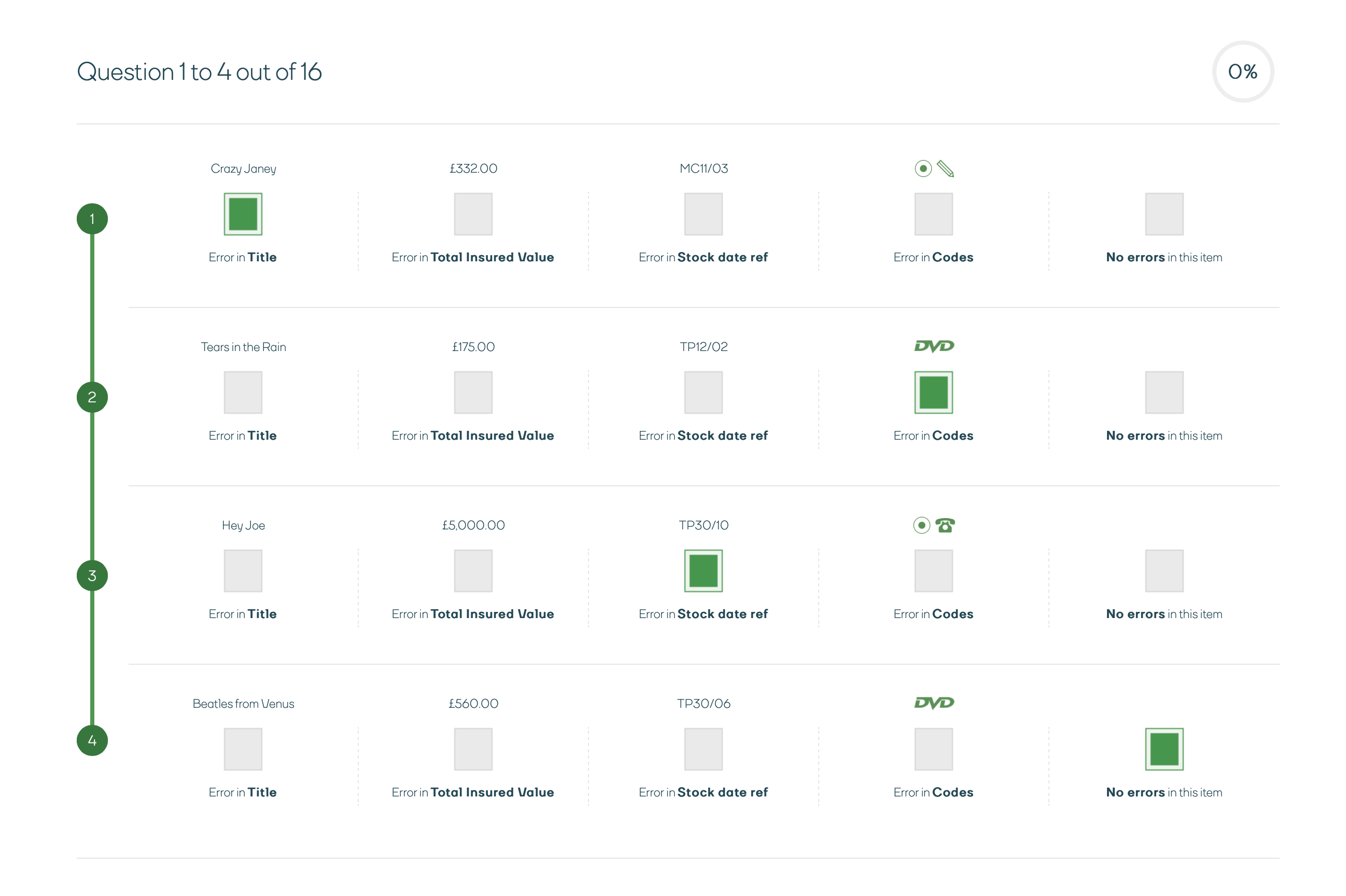

The Checking Test is designed to assess the candidate’s aptitude for spotting errors across different sets of information.

Checking ability tests allow recruiters to assess a candidate’s attention to detail and aptitude for spotting errors in written information, both quickly and accurately, under timed conditions.

Ability:

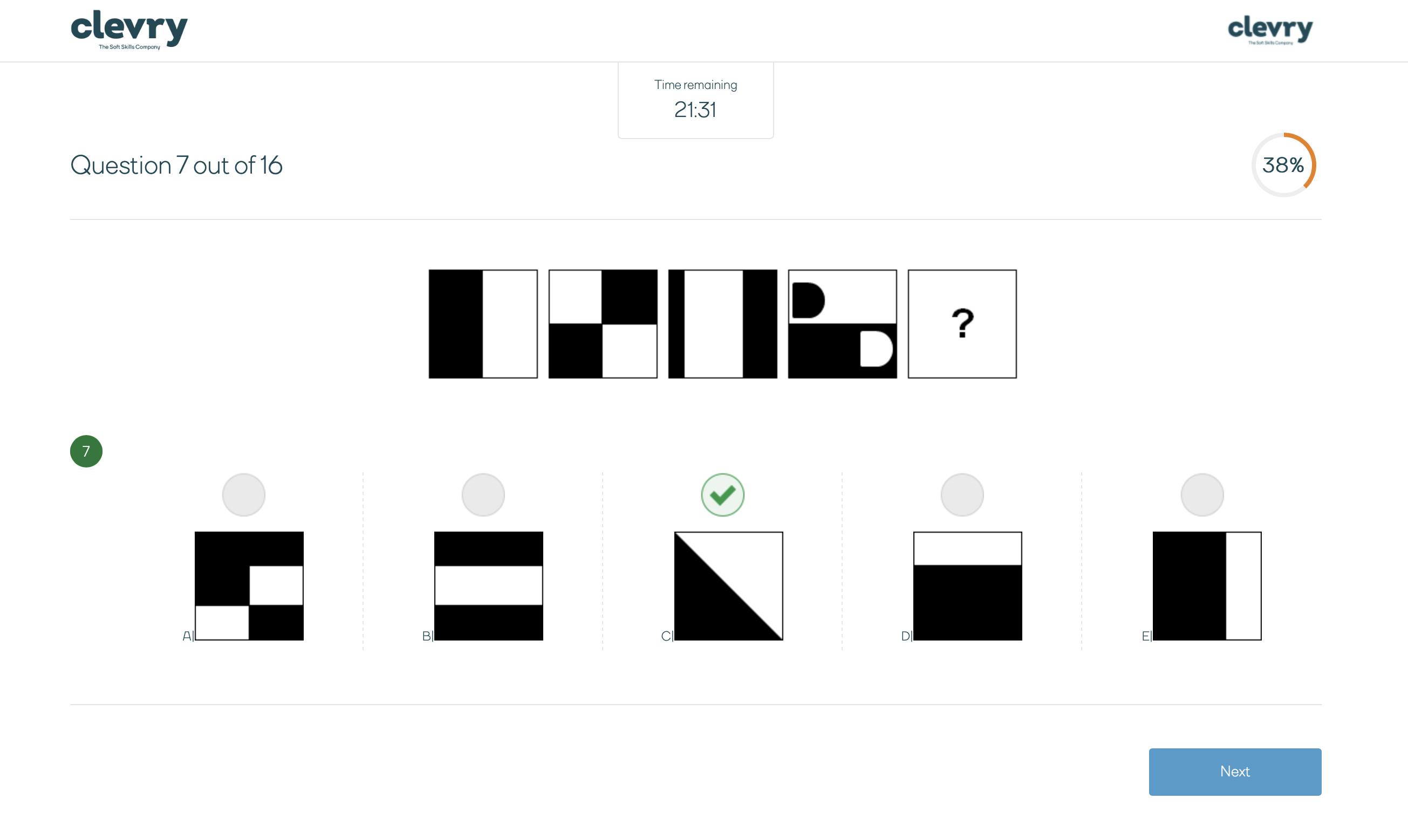

Abstract

An abstract cognitive ability test measures high level abstract reasoning, and will require candidates to work out rules or laws from a series of abstract diagrams. This test is designed to measure a candidate’s ability to identify and manipulate logical patterns found within visual information.

Results from abstract reasoning tests have been found to be predictive of performance in roles that demand abstract reasoning, such as working with complex data or concepts and applying a systems thinking approach to identifying relationships, patterns and trends in organisational data.

Ability:

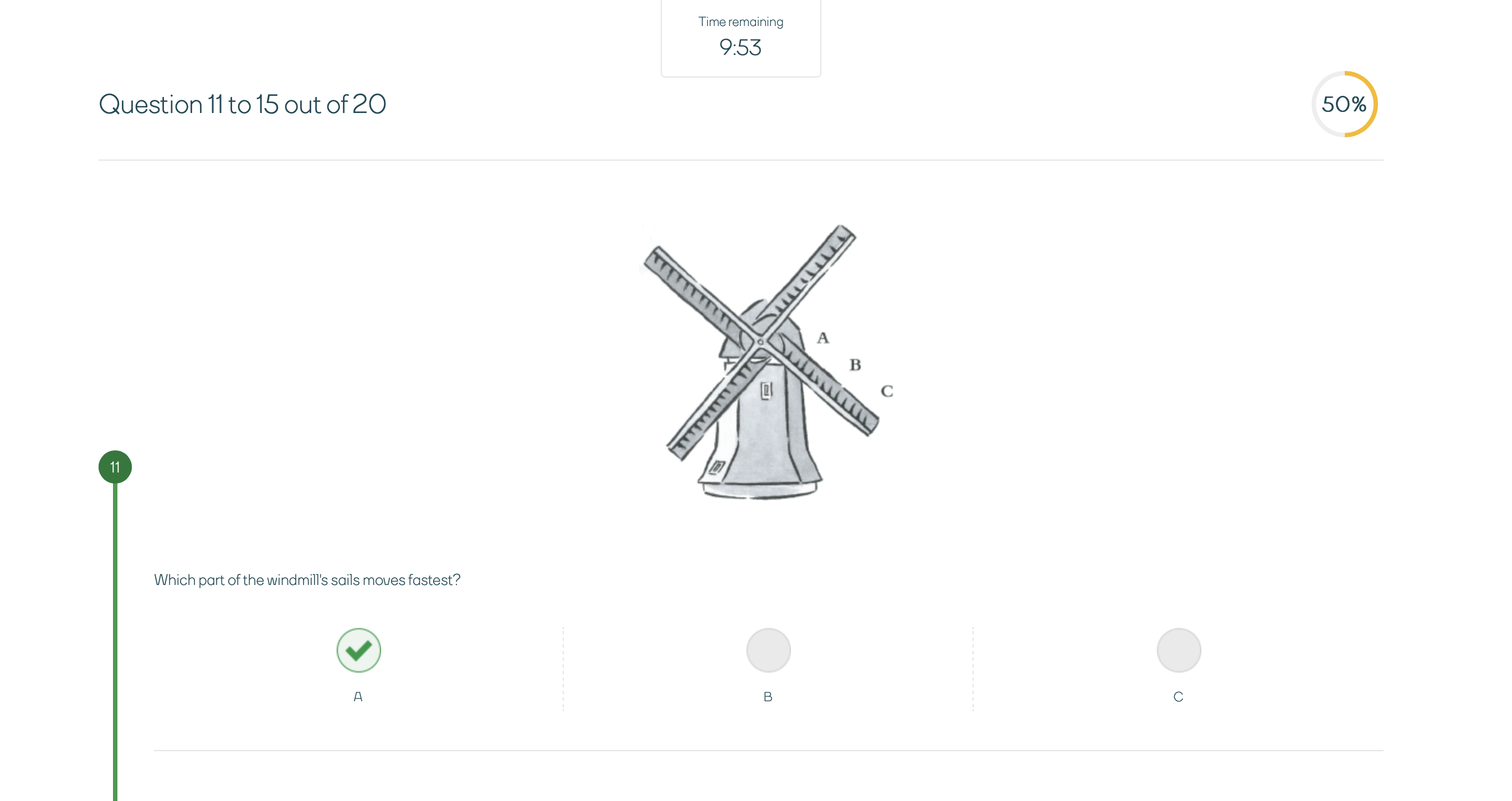

Mechanical

The mechanical ability test is for roles where candidates will need to work with and understand mechanical information or technical concepts.

People taking a mechanical reasoning test will need to show understanding of and be able to apply basic principles of physics to mechanical devices. Candidates will be presented with illustrations of mechanical processes such as gears, pulleys, levers and hydraulics and have to work out the correct answers.

Clevry’s suite of cognitive ability tests

Clevry’s cognitive ability tests come in three levels: Essential, Enhanced, and Expert, with each containing a number of psychometrically rigorous tests.

All of our tests can be mixed and matched to help you tap into the skills that are critical to your organisation and to success within specific roles.

Production, manufacturing & engineering.

Essential: CWS

Tests available: Verbal ability, Numerical ability, Mechanical ability

Customer-facing roles.

Enhanced: B2C

Tests available: Verbal ability, Numerical ability, Abstract ability, Checking ability

Managers, professionals & graduates.

Expert: Utopia

Tests available: Verbal ability, Numerical ability, Abstract ability

Production, manufacturing & engineering.

Essential: CWS

Our suite of Essential tests was designed primarily for blue collar, manufacturing/warehouse environments and public utilities. Assessments in this suite are best suited to organisations which have a strong Production/Engineering focus. Within this industrial sector, these assessments are relevant to a range of role types such as:

- Hourly paid operatives

- Semi-skilled employees

- Apprentices

- Team leaders

- First-level supervisors

Our suite of CWS tests has been designed to be appropriate across a broad range of difficulty levels. This means that the tests are suitable for the assessment of a range of individuals, from those who have no educational qualifications to those who have achieved A-levels or post-school Diplomas/Certificates. Candidates above this level (e.g. graduates) are likely to require tests which are different in nature, as well as difficulty, in order to reflect the responsibilities of the jobs for which they are being considered.

Customer-facing roles.

Enhanced: B2C

B2C Business Challenges has been developed for a range of applications. The assessments in this series are relevant to a range of occupational groups. They may be applied to various roles in which business administration skills are needed. This may include but is not limited to:

- Junior managers

- Customer service staff

- Call centre staff

- Sales people

- Administrators

- Personal assistants

- Secretarial assistants

B2C Ability Tests are particularly suited to situations in which employees are required to perform a wide range of tasks, such as attending to a customer and conducting various administrative duties. In such situations, employees are required to demonstrate both conscientiousness and reasoning skills.

The B2C tests have been designed to be appropriate across a broad range of difficulty levels. This means that they are suitable for the assessment of a range of individuals from those who have little or no educational qualifications to those who have achieved A-levels or post-school Diplomas/Certificates. Candidates above this educational level (e.g. graduates) are likely to require tests which are different in nature, as well as difficulty, in order to reflect the responsibilities of the jobs for which they are being considered.

The initial versions were developed based on job analysis, conducted in-house by our business psychologists. Trialling began with applicants to an administrative post, who varied in educational qualifications, age and experience. Based on this trialling, the items were revised for difficulty level and the length of the assessment was adjusted. The trialling was also used to determine the incorrect answer options for the numerical ability test.

The second phase of trialling was conducted with a large and varied sample of both applicants and job incumbents from a range of industries. These candidates varied widely in age, length of work experience, and educational qualifications. The trialling resulted in minor changes to the assessments and was the basis for reliability analysis of the items.

The final versions were then published, but have been subject to continual development and changes based on subsequent analysis.

Managers, professionals & graduates.

Expert: Utopia

The Utopia series consists of high level critical reasoning tests. They measure abilities which are particularly relevant to the performance of:

- Graduates

- Managers

- Professionals

- Specialists

- Leadership

The Utopia series has been designed to be appropriate for the assessment of individuals of graduate calibre. Candidates assessed using the instruments have typically achieved at least A-Levels or post-school Diplomas/Certificates.

How does a cognitive ability test work?

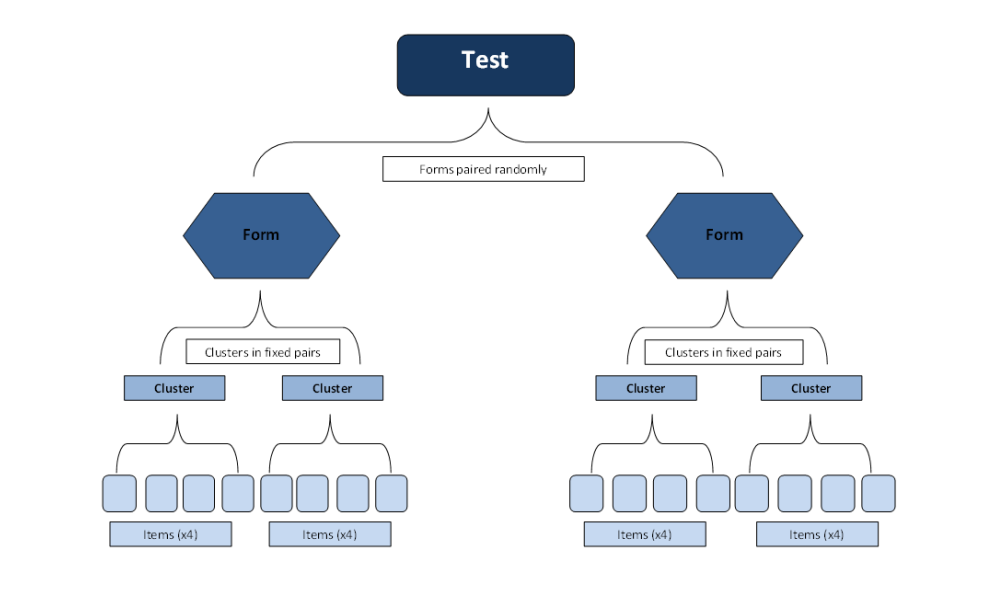

Cognitive ability tests on the Clevry platform work through the randomisation of what we call forms. Forms are essentially made up of clusters of different questions, known as an item bank.

The selection of forms is randomised, such that different candidates are presented with a different set of forms. The ordering of the questions within the forms is then also randomised. This ensures that different candidates are presented with different selections of questions at random, which helps to ensure the security of our assessments, and prevent behaviours like cheating and collusion.

This also allows us to match different sets of questions for difficulty to ensure that all candidates receive assessments which are of the same level of difficulty overall, even if individual clusters or items vary.
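
The two-stage randomisation described above can be sketched as follows. This is a minimal illustration rather than the platform's actual implementation: the item bank contents, the `build_test` helper and the `forms_per_test` parameter are all hypothetical, and the difficulty matching mentioned above is not modelled.

```python
import random

# Hypothetical item bank: each form is a cluster of question IDs.
ITEM_BANK = {
    "form_a": ["a1", "a2", "a3"],
    "form_b": ["b1", "b2", "b3"],
    "form_c": ["c1", "c2", "c3"],
    "form_d": ["d1", "d2", "d3"],
}

def build_test(item_bank, forms_per_test, rng):
    """Pick forms at random, then shuffle the questions inside each form."""
    # Stage 1: choose which forms this candidate sees (no repeats).
    chosen_forms = rng.sample(sorted(item_bank), forms_per_test)
    test = []
    for form in chosen_forms:
        # Stage 2: randomise question order within the form.
        questions = list(item_bank[form])  # copy so the bank is not mutated
        rng.shuffle(questions)
        test.append((form, questions))
    return test

# Each candidate gets an independently randomised selection.
candidate_test = build_test(ITEM_BANK, forms_per_test=2, rng=random.Random())
```

Passing the random number generator in as an argument keeps the sketch easy to test: a seeded `random.Random` reproduces the same selection, while an unseeded one gives each candidate a different draw.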

Developing ability tests for high-level candidates

The ability tests in our Utopia series are based around a common scenario to maximise their face validity and interest.

The initial items were developed in partnership with an investment banking firm, based on job analysis of their high level roles. This was conducted in house by our team of business psychologists.

While the first rendition of paper-and-pencil items was piloted and standardised with this sponsor, the items then underwent further development to ensure they were suitable for wider test use. Where relevant, this involved further general market research into specific forms of assessment.

Pre-trialling involved piloting the items on a group of graduates, who provided feedback on the tests from a candidate’s perspective, and on members of our consultancy team, who provided feedback from a professional test publisher’s perspective. Some changes were made to the tests in order to make them more candidate-centred.

Next, the trial versions of the tests were administered to a sample of graduate candidates applying for roles in a variety of sectors. In both tests, items were selected for the final versions on the basis of their contribution to the psychometric reliability of the overall measure and their level of difficulty. Items which were either too easy or too difficult were removed.

The scenario above describes the initial development phase for each series of ability test. Since then, the assessments have undergone further development based on continual use and analysis. For more specific information about the development of our ability tests, contact us using the details at the bottom of this page.

Verifying cognitive ability test scores

Once your candidates have completed a cognitive ability test, you can request that they complete a quick verification test to measure how closely their scores match their first completion of the assessment. This can be used if you suspect some form of collusion or cheating, or if you would simply like to measure the candidate’s ability twice to gather more information.

Verification tests are a shorter version of the original ability test the candidate completed, using different questions. The results of this assessment are presented in the ability test report alongside other ability test results.

Assessing cognitive ability accessibly

Our assessments use a power test philosophy, meaning that candidates answer increasingly difficult questions with fair time limits. This minimises demands on reading speed and processing time, a common source of test bias against those who read or process more slowly than others (for example, candidates for whom English is not their first language, or who have visual conditions or a form of dyslexia/dyspraxia).

Our assessments measure underlying abilities, uncontaminated by reading/processing speed.

We also comply with UK DDA requirements to ensure maximum accessibility for respondents. The candidate interface conforms to level Double-A of the W3C accessibility guidelines. This means that all fonts are resizable and that the interface is compatible with assistive devices such as screen readers and refreshable Braille displays.

Users can also change the contrast of the screen and background colour of the assessments, and test timers can be adjusted for candidates who may need increased time limits.

Speed Test vs Power Test

Speed Test

In a speed test the method needed to reach the answer is often clear and the scope of the questions tends to be limited. As standalone items, the questions appear relatively simple.

The number of correctly answered questions in a given period of time is the measure of ability in a speed test.

Power Test

Compared to a speed test, a power test utilises a smaller number of more difficult items. The required method is not made clear and therefore the test is in the candidate’s ability to work out how to solve the problem.

Time limits on power tests tend to be generous.

What does a Cognitive Ability Test measure?

A Cognitive Ability Test measures the maximum performance of a candidate’s cognitive ability.

For example, the results from one of our tests show us how a candidate has performed, in comparison to a diverse group of previous test takers. This allows us to interpret the results in terms of what is typical within a given group, rather than just how many questions a candidate has gotten right.

How is a Cognitive Ability Test scored?

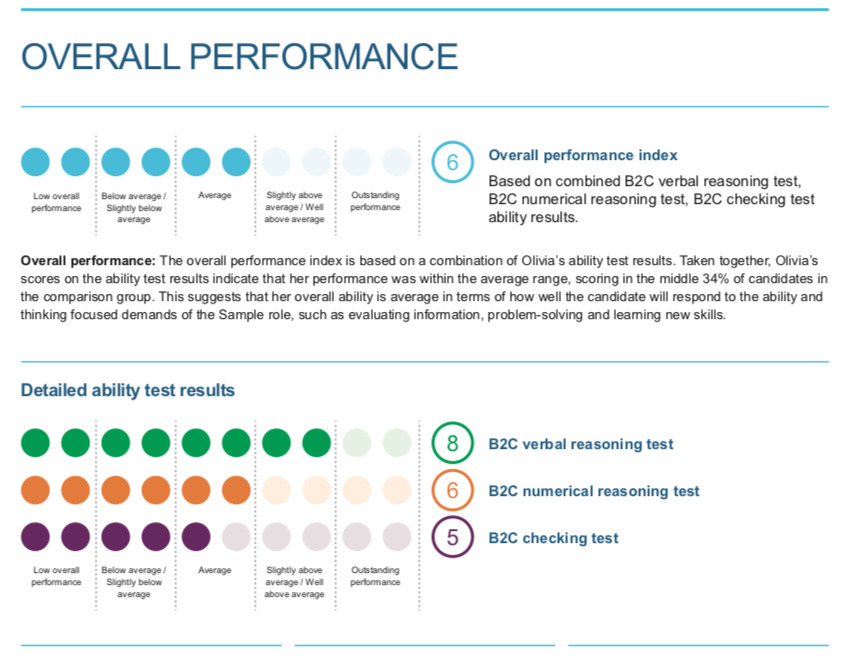

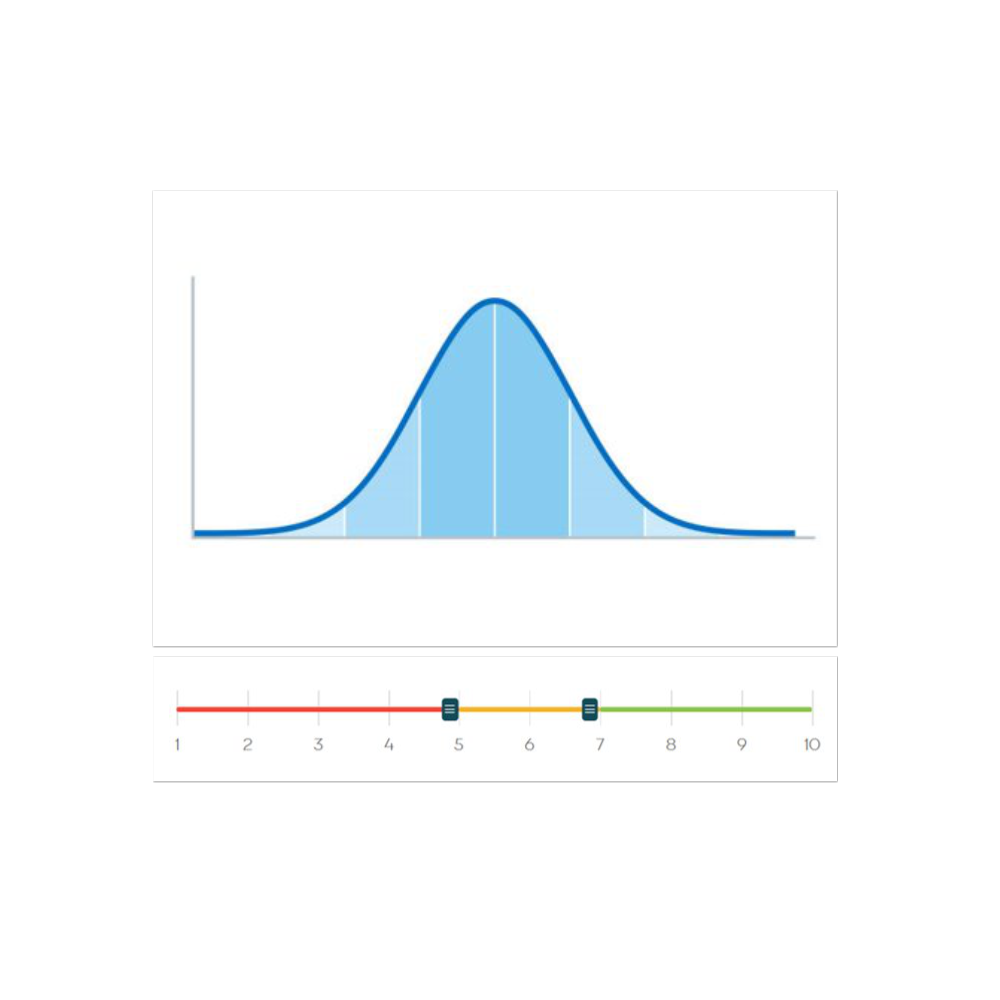

All Cognitive Ability Tests delivered through the Clevry assessment platform use norm referenced scoring.

This means that candidates’ scores are compared to a diverse group of individuals who have completed the same assessments in the past.

We therefore report a STEN score, which has been standardised to show a candidate's ability compared to a group of others. This is important for accurate interpretation of results.

For example, if you assess two candidates and one achieves a raw score of 14/15 and the other 12/15, it appears as if the first candidate has scored much better on the assessment, answering a further 13% of the questions correctly.

However, if by looking at the norm group we know that the average score on the assessment is actually 4/15, then both of these candidates have performed outstandingly, and it would be reductive to separate them on such a small difference in score.

Sten scores already take this comparison into account, meaning that they aid more accurate interpretation.

What is a STEN score?

Sten scores are a standardised scoring system which indicates where an individual’s score sits in relation to a relevant comparison population. Sten scores, standing for ‘Standard Ten’, range from 1 to 10, with middling scores of 5-6 suggesting average ability, scores of 9-10 indicating high ability, and scores of 1-2 indicating low ability compared to the comparison group.

Each cognitive ability test has its own norm group, as each assessment contains a different set of questions. This means that the make-up of each norm group will be slightly different even between assessments within the same test series (i.e. CWS, B2C, Utopia).
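
To make the 14/15 versus 12/15 example concrete, here is a minimal sketch of a sten conversion using the conventional definition of the scale (a mean of 5.5 and a standard deviation of 2, limited to the range 1 to 10). The norm group average of 4/15 comes from the example above; the norm group standard deviation of 2.5 is an assumption made purely for illustration, and published norm tables may map raw scores to stens differently.

```python
def sten_score(raw, norm_mean, norm_sd):
    """Convert a raw score to a sten using the conventional linear formula."""
    z = (raw - norm_mean) / norm_sd   # distance from the norm group mean, in SDs
    sten = round(5.5 + 2 * z)         # sten scale: mean 5.5, SD 2
    return max(1, min(10, sten))      # stens are limited to the range 1-10

NORM_MEAN = 4.0   # average raw score in the norm group (from the example)
NORM_SD = 2.5     # assumed spread of the norm group, for illustration only

candidate_a = sten_score(14, NORM_MEAN, NORM_SD)
candidate_b = sten_score(12, NORM_MEAN, NORM_SD)
```

Under these assumptions both candidates land at the top of the scale, which reflects the point that a two-mark difference in raw score says little once the comparison group is taken into account.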

Why are cognitive ability tests timed?

Our cognitive ability tests are measures of power, rather than speed. This means that we provide candidates with generous time limits so that they have the opportunity to perform at their best, rather than measuring how quickly candidates can answer a series of questions.

Research has demonstrated more positive perceptions of the testing process when candidates sat power tests compared to speed tests. In line with our ‘candidate is king’ philosophy, our tests use generous time limits to avoid adverse impacts due to excess anxiety, and to promote a positive image for the clients that use our tests.

Furthermore, power tests can be argued to be fairer to candidates for whom the language of the assessment is a second language, since they are not forced to read and complete questions within a tight time limit. In order to measure maximum rather than typical performance of cognitive ability, even power assessments do still need to have a time limit. While the questions are designed to be challenging, with unlimited time most candidates would be able to work out the correct answers.

Time limits mean that we are measuring candidates’ cognitive ability in a standardised way, looking at their ability to process information within a set time period. Furthermore, with no time limits at all, we would also open the assessments up to a great deal of potential cheating and collusion from candidates.

How are time limits set?

The time limits on our ability tests have been set based on the completion times of hundreds of previous candidates during the trialling and development of the assessments.

Time limits are based on the average time taken to complete each form within an ability test, meaning that time limits are not always numbers rounded to the nearest 5 or 10 minutes.

Our time limits are also reviewed in line with our regular assessment research and development of ability tests, headed up by our Science and Technology team. As our assessments have been designed to measure power rather than speed, candidate completion time isn’t considered when calculating sten scores. Completion times are nonetheless available in ability test reports for your reference, along with the average completion times for that assessment.
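
As an illustration of why time limits based on average completion times rarely come out as round numbers, the sketch below averages trial completion times per form and sums them for a test. The trial data and function names are hypothetical, and the source does not state what further allowance, if any, is applied on top of the averages, so the result is labelled a baseline rather than a final time limit.

```python
from statistics import mean

# Hypothetical trial data: completion times in seconds, per form.
trial_times = {
    "form_a": [412, 388, 455, 430],
    "form_b": [501, 476, 520, 489],
}

def average_time_per_form(times_by_form):
    """Mean completion time for each form."""
    return {form: mean(times) for form, times in times_by_form.items()}

def baseline_test_time(times_by_form, forms):
    """Sum of the per-form averages for the forms that make up a test."""
    averages = average_time_per_form(times_by_form)
    return sum(averages[form] for form in forms)

baseline = baseline_test_time(trial_times, ["form_a", "form_b"])
# 917.75 seconds, i.e. roughly 15 minutes 18 seconds
```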

The output:

Cognitive Ability Test Report

Our cognitive ability test report provides a comprehensive overview of a candidate’s performance on one or more cognitive ability tests.

The report contains an overview of the tests taken, including what they assess and how they are scored, along with guidelines on how to use the report, get the most out of it and interpret the results appropriately.

Ability test results are then explored in more detail, with each score linked to what it may suggest about how effectively the candidate is likely to deal with the demands of a role requiring the abilities measured by the assessment.